Advanced AI - Mechanics & Control

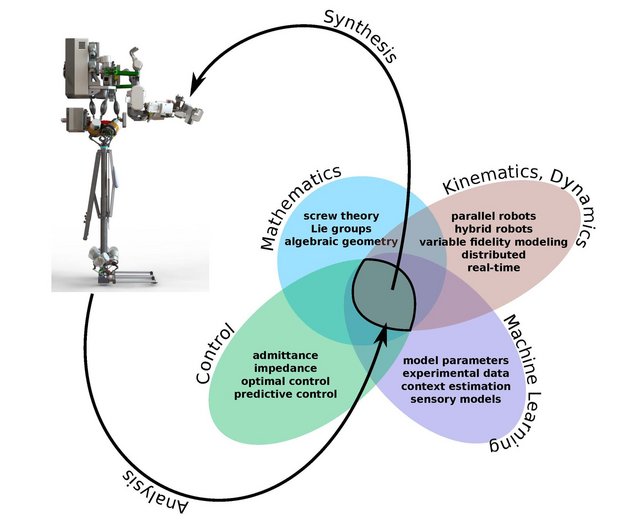

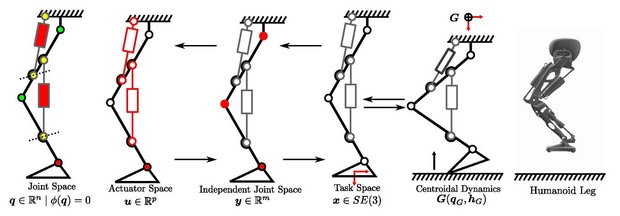

The “Mechanics & Control” team studies the fundamentals of mechanics and motion control. In mechanics, the team addresses problems in the geometry, kinematics and dynamics of complex robotic systems under various constraints. Both the analysis and the synthesis of such systems are of interest: analysis covers the position, velocity, acceleration and force analysis of mechanisms, while synthesis deals with the optimization of their design parameters to achieve the desired kinematic and dynamic behavior. The team also aims for inherently compliant and elastic robots, and therefore models joint and link flexibilities to enable accurate control. Lastly, it is interested in approaches for model simplification and variable-precision modeling for different applications.
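To make the notion of position and velocity analysis concrete, the following minimal sketch treats a planar two-link arm. It is an illustration only and not the team's own software: the arm, the function names and the unit link lengths are assumptions chosen for this example. Position analysis maps joint angles to the end-effector position, and velocity analysis maps joint rates to the end-effector velocity through the Jacobian.

```python
import math


def forward_kinematics(q1, q2, l1=1.0, l2=1.0):
    """Position analysis: end-effector (x, y) of a planar two-link arm.

    q1, q2 are joint angles in radians; l1, l2 are assumed link lengths.
    """
    x = l1 * math.cos(q1) + l2 * math.cos(q1 + q2)
    y = l1 * math.sin(q1) + l2 * math.sin(q1 + q2)
    return x, y


def jacobian(q1, q2, l1=1.0, l2=1.0):
    """2x2 Jacobian: partial derivatives of (x, y) with respect to (q1, q2)."""
    s1, c1 = math.sin(q1), math.cos(q1)
    s12, c12 = math.sin(q1 + q2), math.cos(q1 + q2)
    return [
        [-l1 * s1 - l2 * s12, -l2 * s12],
        [l1 * c1 + l2 * c12, l2 * c12],
    ]


def end_effector_velocity(q1, q2, dq1, dq2, l1=1.0, l2=1.0):
    """Velocity analysis: end-effector velocity as Jacobian times joint rates."""
    J = jacobian(q1, q2, l1, l2)
    vx = J[0][0] * dq1 + J[0][1] * dq2
    vy = J[1][0] * dq1 + J[1][1] * dq2
    return vx, vy


if __name__ == "__main__":
    # Example configuration: first joint at 0 rad, elbow bent by 90 degrees.
    print(forward_kinematics(0.0, math.pi / 2))
    print(end_effector_velocity(0.0, math.pi / 2, 1.0, 0.0))
```

Synthesis, in this picture, would run the other way: the link lengths `l1` and `l2` become the design parameters to be optimized for a desired workspace or velocity behavior.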

In motion control, the focus is on two aspects: prediction and optimization. For prediction, the team works on learning and applying dynamic and sensory models in order to predict movement data. For optimization, it uses optimal control for robots, deriving relevant cost functions that yield optimal solutions for complex robotic systems, including under-actuated and highly redundant systems. In addition, the team is interested in probabilistic modeling to estimate motion contexts, and in learning inverse control models that take biological principles into account, such as Feedback-Error Learning.

Team lead: Dr.-Ing. Dennis Mronga

Deputy: Dr. Melya Boukheddimi